The Paper Cup Theory of Espionage

The CIA just published something remarkable: an admission that the future of spying looks a lot like the past.

In the March 2026 edition of Studies in Intelligence, the Agency’s internal journal (portions of which are declassified for public release), former case officer Thomas Mulligan makes an argument that inverts everything you’ve heard about artificial intelligence rendering human work obsolete. He argues that as AI becomes more sophisticated, the oldest techniques in the intelligence trade become more valuable, not less. Dead drops. Brush passes. Face-to-face meetings. Tradecraft that predates electricity is returning as cutting-edge methodology.

The Marginal Advantage Problem

Mulligan’s core insight concerns what happens when sophisticated capabilities become cheap and widespread. High-resolution satellite imagery that once required billions of dollars in government infrastructure now costs hundreds of dollars from commercial providers. AI can now process surveillance footage that once took teams of analysts months to review. The problem isn’t that technical intelligence becomes worthless. It’s that when everyone has it, no one gains an edge from it.

His unexpected analogy comes from horse-racing betting markets. In the 1980s, professional gamblers armed with statistical models and computing power crushed casual bettors. Within a generation, those tools became so accessible that the advantage disappeared, and the edge migrated back to qualitative judgment. In intelligence, that means the kind of insight that comes from a well-placed human source who knows which general is actually making decisions, or which weapons program is genuine and which is a budget decoy. Mulligan notes that certain intelligence will likely never be captured by AI, including information held on air-gapped systems, leadership intentions, and the location of “off-switches” designed to control rogue AI (which would, by design, be hidden from the AI itself).

Signal and Noise

The second dynamic is information pollution. Mulligan describes a hypothetical “fog of war machine” that floods an environment with AI-generated disinformation: fake phone calls, synthetic documents, and fabricated video evidence. An adversary could use AI to generate ten times as many plausible-but-false communications as real ones, rendering signals intelligence useless or worse. Deepfakes are already convincing enough for serious fraud: criminals stole $25 million from a Hong Kong firm after a finance employee believed they were on a video call with the company’s CFO.

He warns that fabricators (sources who supply false information) can now use locally run language models, fine-tuned on real organizational details, to generate an unlimited stream of plausible reports. Under those conditions, a human source who can tell which signals are genuine becomes invaluable.

When Technology Eats Itself

The most interesting argument concerns how technology can undermine itself. AI-powered surveillance is making in-person tradecraft increasingly dangerous. Mulligan describes autonomous drone swarms, and nano-drones small enough to follow case officers into buildings undetected. Counterintelligence services are deploying facial recognition to identify “operational acts” such as brush passes.

Here’s the paradox: AI also makes it trivially easy to generate convincing deepfakes of anyone, so electronic communications become inherently low-trust. If you receive an email or video message from your intelligence contact, you cannot be certain it actually came from them. But if your contact hands you a physical object in person, or leaves it at a pre-arranged hiding spot you can verify wasn’t under surveillance, you can be far more confident it is genuine.

The Limits of Omniscience

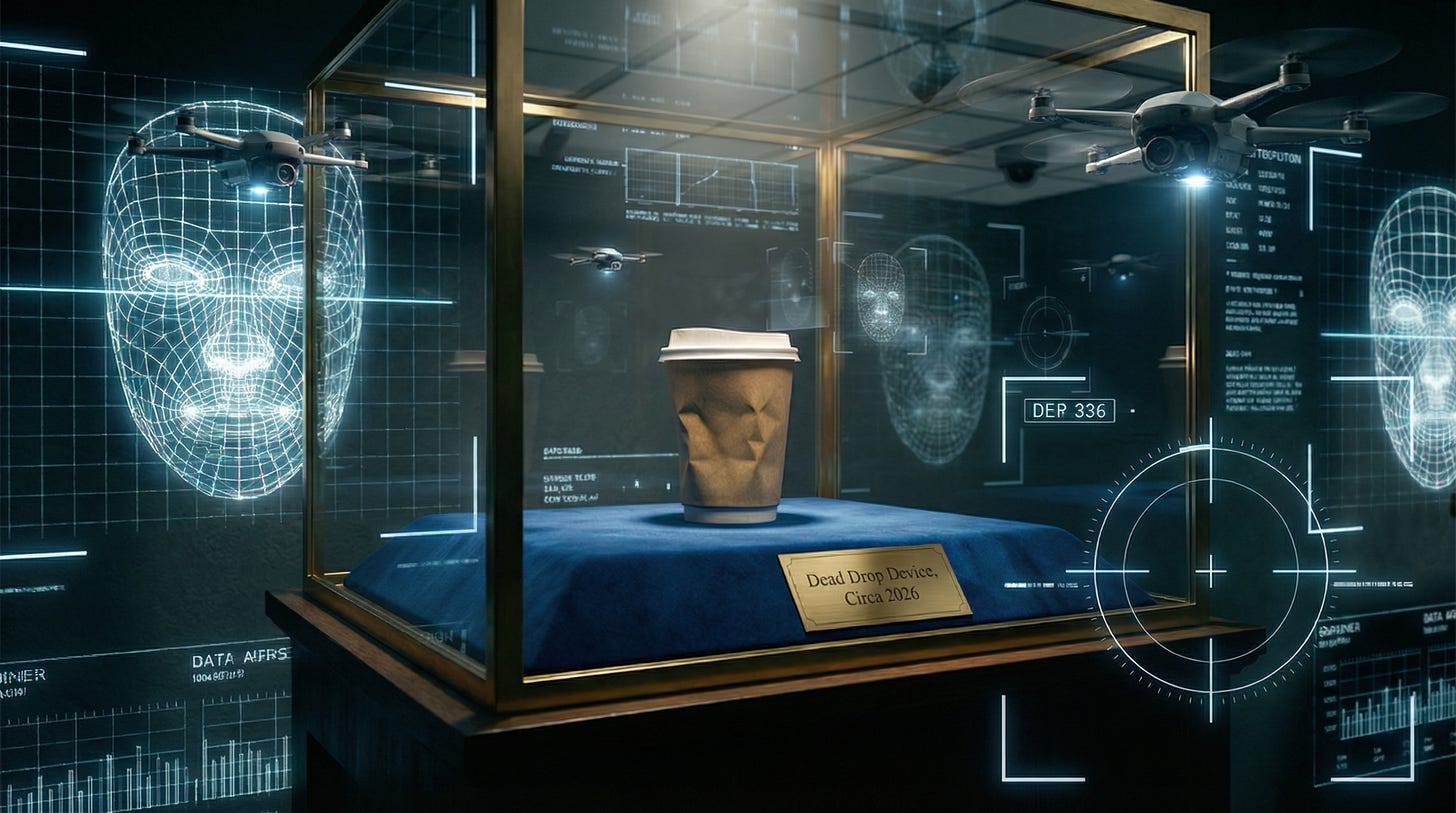

Which brings us to the paper cup, Mulligan’s example of the limits of AI surveillance: “Every day, in every city, scores of people casually toss paper cups on the ground. Nearly all of them are litterers. Occasionally, one is a case officer conducting a dead drop. It’s not clear how any surveillance system, AI-powered or otherwise, could discriminate between the two.”

AI excels at pattern recognition, but certain operational acts are designed to be indistinguishable from mundane civilian behavior. A memory card glued inside a disposable cup and tossed near a trash can looks exactly like littering. These techniques survive because they exploit the false-positive problem: genuine operational acts are vanishingly rare among countless identical-looking innocent ones, so any system that flags the behavior itself will drown in false alarms. Unless you already know someone is an intelligence officer, you cannot tell which of their thousands of daily micro-behaviors constitute espionage.

The CIA doesn’t publish this journal to entertain outsiders. The decision to declassify this particular article suggests the Agency wants its people focused on what remains scarce now that technical superiority is being democratized: trusted human relationships that can separate truth from an ocean of synthetic information.

In a world of ubiquitous sensors and algorithmic omniscience, the most sophisticated espionage technique might be the one that looks most like mundane human carelessness.

Just don't try this in Singapore!