The Prophecy in the Gaps: What Mendeleev's Table Reveals About How We Know Things

Mendeleev predicted elements no one had seen and was proved right. How his table became authoritative reveals something deeper about what has changed between his era and ours.

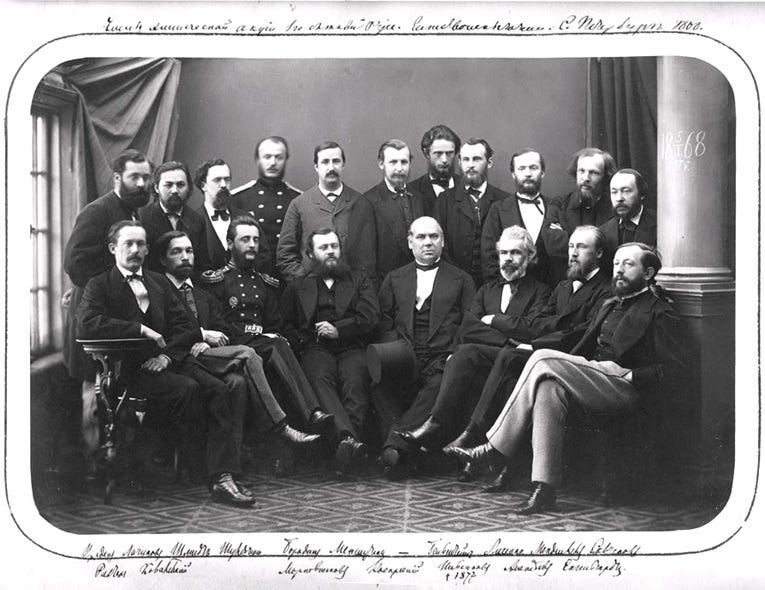

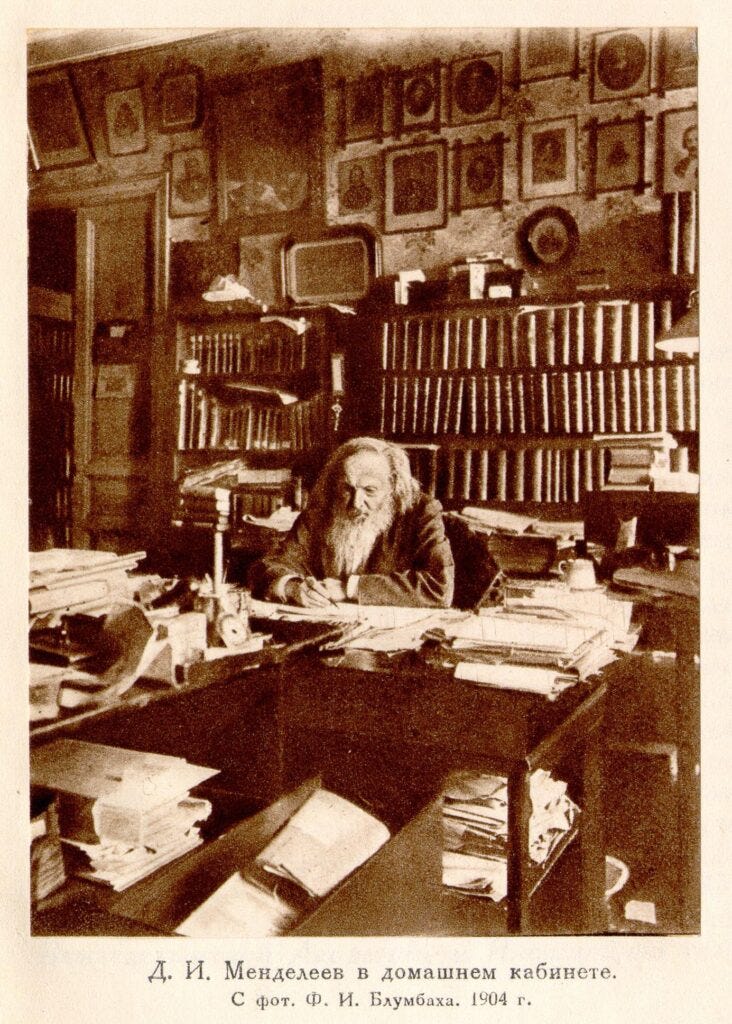

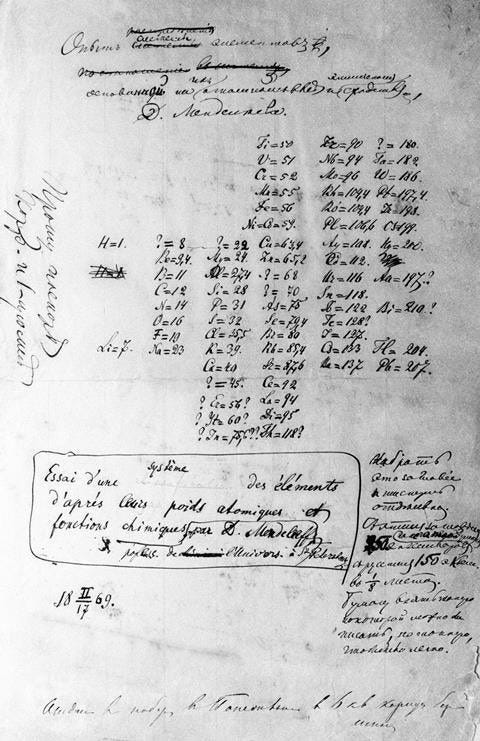

157 years ago today, on March 1, 1869, Dmitri Mendeleev sent a manuscript to the printer in St. Petersburg. The document proposed an arrangement of the chemical elements into rows and columns. His periodic table didn't just organize what was known; it predicted what wasn't. He left gaps for elements no one had seen, and by 1871 he had specified their atomic weights and chemical properties with startling precision.

When gallium turned up in 1875, it matched his predictions almost exactly. Scandium followed in 1879, then germanium in 1886. The table worked because Mendeleev had discovered genuine patterns in physical reality, and because the scientific community had mechanisms to test his claims. Within two decades, his framework became foundational.

In that older order, truth was less a feeling than a discipline. Claims did not become authoritative because they were vivid or widely repeated; they became authoritative by surviving contact with a shared method. Knowledge was arranged, named, and revised inside structures that made room for ignorance and made error legible, which is another way of saying they made correction possible. The periodic table is a small monument to that arrangement: an ordered wager that reality has a grammar, that patterns can be proposed without pretending to be complete, and that the gaps matter precisely because they place the burden of proof on the world rather than on persuasion.

The Invisible Architecture

Mendeleev’s table didn’t become authoritative because it went viral. It became authoritative because it worked, and because a specific institutional ecosystem existed to test whether it worked. The Royal Society’s Philosophical Transactions, launched in 1665, laid early groundwork for peer review; universities formalized research standards; journals created mechanisms for replication. By the mid-19th century, these institutions didn’t just publish findings; they filtered them. This wasn’t a popularity contest or engagement optimization. It was evaluation by practitioners who had spent years mastering the same technical domain. The scientific community served as a filter, but one calibrated to epistemic standards rather than emotional resonance. The gap between alchemy and chemistry wasn’t knowledge; it was institutional architecture.

That architecture rested on a simple mechanism: claims had to survive multiple challenges, including peer scrutiny before publication, replication by competing labs, and integration into existing theoretical frameworks. The process was slow and often frustrating, but it produced knowledge that held up when tested by people who wanted it to fail.

The system worked well enough that public trust became almost reflexive. When scientists said smoking caused cancer or CFCs damaged the ozone layer, most people eventually believed them, not because they understood the underlying chemistry, but because the institutional process had earned credibility through demonstrated predictive power.

Then something broke.

Friction as Feature, Not Bug

The institutional structure of 19th-century science imposed costs that seem almost archaic now. Publishing required navigating editorial boards, peer review processes, and space constraints in physical journals. Attending scientific society meetings meant traveling to specific cities on specific dates. Replicating experiments required access to laboratories, equipment, and materials.

Institutional friction operated on several levels. Scientific societies created communities of practice where shared technical knowledge allowed for sophisticated evaluation. Journal publication exposed claims to distributed scrutiny from practitioners across different locations. Replication requirements meant that experimental results needed to be robust enough to survive in other people’s hands, with different equipment, under different conditions.

Most importantly, the system created time for error correction. When Mendeleev first published his table, he made mistakes: he misplaced some elements and predicted several that do not exist. The slow pace of institutional communication meant these errors could be identified and corrected through correspondence, revised publications, and subsequent research before they became entrenched as dogma. The friction that slowed adoption of correct ideas also slowed adoption of incorrect ones.

The Predictive Gap

Mendeleev’s table was valuable precisely because it was vulnerable: it made specific claims about unobserved phenomena, claims that observation could definitively refute. When those predictions succeeded, confidence in the framework was justified by something external to the social system that had elevated it.

The 19th-century institutional system, for all its flaws, created a rough correspondence between what society treated as authoritative knowledge and what had survived meaningful tests against reality. That correspondence was never perfect: scientific racism, eugenics, and numerous other horrors achieved institutional backing despite failing genuine epistemic standards. But the system at least contained mechanisms for eventual correction when predictions failed.

Viral ideas, by contrast, can remain culturally influential indefinitely despite predictive failure, because the metrics that determine their visibility have no connection to external validation.

Living in Two Systems

Academic journals still use peer review, preserve the institutional structure of Mendeleev’s era, and filter claims through domain expertise. But alongside that slow, friction-laden process runs an information ecosystem where claims can achieve mass distribution in hours, face no requirement to make testable predictions, and optimize for engagement rather than accuracy.

Ideas with strong institutional backing but weak viral potential remain confined to specialist communities. Ideas with weak epistemic foundations but strong emotional resonance achieve broad cultural penetration. The two spaces rarely communicate effectively, and when they do, the institutional voice often arrives too late and with insufficient emotional force to compete with what algorithms have already amplified.

This creates a challenge that Mendeleev never faced: establishing truth in an environment where the mechanisms for testing claims operate on completely different timescales, and apply completely different criteria, than the mechanisms for distributing them. His system was integrated: the same institutions that evaluated claims also amplified them.

The periodic table succeeded not because Mendeleev was eloquent or because his theory felt intuitively satisfying. It succeeded because it worked. It predicted things no one had seen, and then those things appeared very nearly as described. That kind of success (external, verifiable, exposed to refutation) was once non-negotiable.

Distribution does not demand it.