What Comes After We Automate Thought?

The Stone Age externalized force. The Steam Age externalized energy. The Information Age externalized memory. What happens when we externalize thought itself?

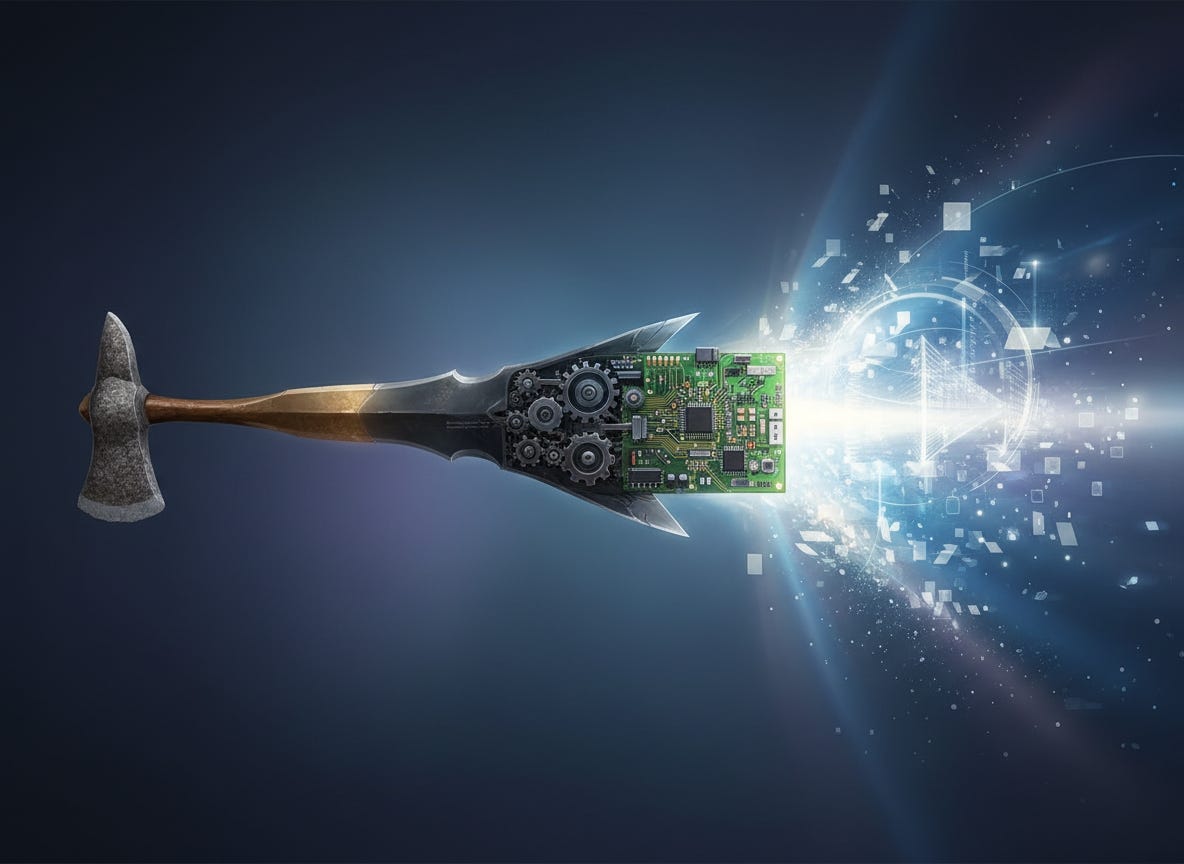

Stand in a museum and trace the arc of the ages. Stone hand axes in the first display case, their edges still sharp after 300,000 years. Bronze blades in the next. Iron plows. Steam engines. Vacuum tubes. Microchips. Each artifact marks an age, and each age is defined by the dominant tool that structured civilization around it.

The standard narrative treats this as a steady accumulation of capabilities—humans getting better and better at bending the world to our will. But zoom out far enough, and a different pattern emerges: a progression not of mastery, but of externalization. Each age represents another human capacity we’ve managed to outsource to the environment around us.

The Stone Age through the Iron Age were about externalizing physical force. We took the work our muscles did—cutting, pounding, shaping—and embedded it into objects that did it better. The Industrial Age externalized energy transformation itself, replacing human and animal power with steam and combustion. The Information Age externalized memory and communication, freeing us from the limitations of what we could hold in our heads or transmit through speech.

Now we’re externalizing cognition. And I’ve been trying to understand whether this follows the same pattern as previous transitions, or whether we’ve reached something qualitatively different.

The Mechanics of Offloading

Psychologists have a term for what we’re describing: cognitive offloading. It’s the human tendency to use external resources to reduce mental effort. Writing a shopping list rather than memorizing items. Using GPS instead of learning routes. Setting phone reminders instead of keeping schedules in your head.

This isn’t a modern phenomenon or a sign of declining mental capacity—it’s what humans have always done. The difference is scale and speed. Writing systems emerged around 3200 BCE, fundamentally changing how societies stored and transmitted knowledge. But it took millennia for literacy to become widespread. The printing press accelerated the process in the 1440s, yet universal literacy remained centuries away in most places.

With AI, we’re compressing that entire trajectory into a single generation. Today’s college students learned to research using Google; they’ll enter workplaces where AI systems draft the initial analysis. The externalization that once took societies generations to absorb now happens within individual lifetimes.

Here’s what’s genuinely strange about this moment: we’re offloading the very capacity we used to decide what to offload. Previous externalizations were tools that extended human intention—a hammer extends your arm, a car extends your legs, a calculator extends your arithmetic ability. But you still decided when and how to swing the hammer, where to drive, which numbers to multiply.

Large language models and other AI systems don’t just extend thought; they increasingly substitute for it. Ask ChatGPT to draft an analysis, and you’re not extending your analytical capacity. You’re delegating the analysis itself, then deciding whether to accept the output. The locus of cognitive work has shifted.

What Gets Lost in Translation

Stanford researchers Benoît Monin and Erik Santoro found something telling when they studied how people respond to advances in AI. After reading about AI capabilities, participants consistently rated distinctively human attributes (personality, morality, relationships) as more essential to human nature than control groups did.

The pattern suggests a kind of defensive repositioning. As AI claims more of the cognitive territory we thought was uniquely ours, we retreat to the remaining high ground and declare that to be what really mattered all along. Logic and reasoning seemed essential to human identity when animals couldn’t do them; now that machines can, we emphasize emotional intelligence and interpersonal warmth instead.

This isn’t necessarily wrong—those capacities are valuable, perhaps more valuable than we previously recognized. But the goalpost-moving reveals an uncomfortable truth: we don’t actually have a stable definition of what makes humans distinctive. Each technological age forces a renegotiation.

Shannon Vallor, a philosopher who worked as an AI ethicist at Google, argues that what distinguishes humans isn’t any particular capability but the struggle to cultivate virtue. Being loving, honest, or courageous isn’t something you achieve once, like passing a test. It requires navigating the world with particular priorities in mind while constantly asking what you should do, how, and why. “This struggle is the root of existentialist philosophy,” she writes. “At each moment we must choose to exist in a particular way.”

An AI can generate text about love without having the capacity to love. It can produce creative work without experiencing the “painful reimagining of the self” that characterizes actual human creativity. The difference matters—but it’s a difference of depth and experience, not of output quality. And in an economy organized around outputs rather than processes, that distinction may not hold much weight.